Unmasking the Threat: Defending Against AI-Powered Voice and Video Cloning Impersonations

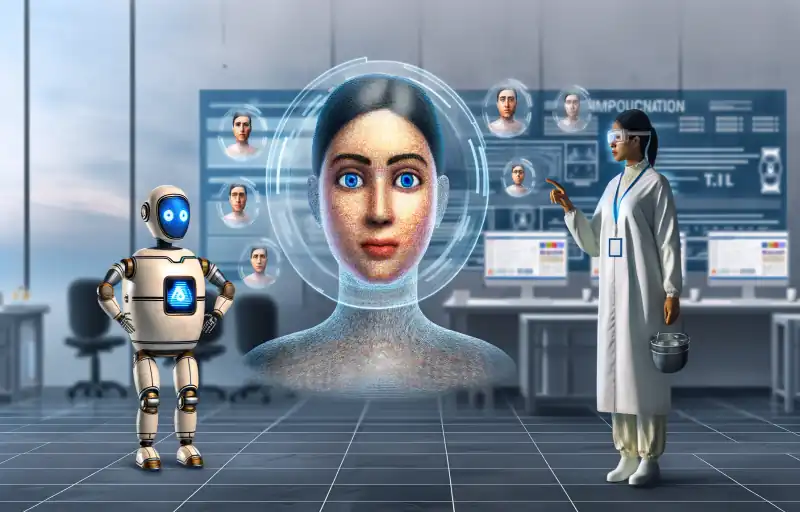

The Rise of AI-Powered Voice and Video Cloning: Impersonating Trusted Individuals

Advances in artificial intelligence (AI) have made voice and video cloning practical, and malicious actors increasingly use these techniques to impersonate trusted individuals. By posing as family members, co-workers, or business partners, they deceive and manipulate unsuspecting victims.

Voice cloning, in particular, has become a significant concern in recent years. With the help of AI algorithms, malicious actors can replicate someone’s voice with striking accuracy, imitating the tone, pitch, and even the unique quirks of that person’s speech. As a result, they can trick others into believing that they are speaking to someone they trust.

Imagine receiving a phone call from what sounds like your elderly mother, only to find out later that it was an AI-powered voice clone. Attackers exploit this emotional vulnerability by impersonating loved ones and asking for money or sensitive information. The consequences can be devastating, both emotionally and financially.

Video cloning, on the other hand, lets malicious actors manipulate footage so that someone appears to say or do things they never did. Using AI algorithms, they can superimpose one person’s face onto another person’s body and produce a convincing illusion. The resulting videos, known as deepfakes, have gained notoriety for their ability to deceive even careful viewers.

The implications of AI-powered voice and video cloning are far-reaching. In cybersecurity, these techniques threaten individuals and organizations alike: malicious actors can use them to gain unauthorized access to sensitive information, bypass security measures, or commit fraud. Individuals and businesses need to be aware of this growing threat.

Detecting AI-powered voice and video cloning is difficult. Traditional methods of authentication, such as passwords or security questions, are no longer sufficient on their own against these techniques. As AI continues to evolve, so too must our methods of defense, and researchers and technology companies are working on new ways to counter this emerging threat.

One such solution is AI-powered detection. Detection algorithms analyze voice and video recordings for signs of manipulation or cloning, such as anomalies in speech patterns, unnatural facial expressions, or subtle inconsistencies in lighting and shadows. These algorithms have the potential to become powerful tools in the fight against AI-powered impersonation.
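To make the idea of spotting "subtle inconsistencies in lighting" concrete, here is a deliberately simple Python sketch. It is not how production deepfake detectors work; it only illustrates the general pattern of scoring a signal and flagging outliers. The function name `flag_lighting_anomalies`, the per-frame brightness input, and the cutoff of 3.5 (a conventional value for this kind of robust score) are all assumptions made for illustration.

```python
from statistics import median


def flag_lighting_anomalies(brightness, threshold=3.5):
    """Return indices of frames reached by an unusually large brightness jump.

    brightness: per-frame mean brightness values (hypothetical input; a real
                system would first extract these from decoded video frames).
    threshold:  cutoff on the robust z-score (assumed, tunable).
    """
    # Frame-to-frame change in brightness; genuine footage tends to change smoothly.
    deltas = [abs(b - a) for a, b in zip(brightness, brightness[1:])]
    if not deltas:
        return []

    med = median(deltas)
    # Median absolute deviation: a spread estimate that outliers barely affect.
    mad = median(abs(d - med) for d in deltas)
    if mad == 0:
        # Crude fallback: most changes are identical, so flag any that differ.
        return [i + 1 for i, d in enumerate(deltas) if d != med]

    # 0.6745 rescales the MAD so the score is comparable to a standard z-score.
    return [
        i + 1
        for i, d in enumerate(deltas)
        if 0.6745 * (d - med) / mad > threshold
    ]


if __name__ == "__main__":
    # Frame 5 is abruptly brighter than its neighbours.
    frames = [100, 102, 101, 104, 103, 150, 104, 102, 103, 101]
    print(flag_lighting_anomalies(frames))  # [5, 6]
```

The example flags frame 5 (the jump up) and frame 6 (the jump back down). A real detector would combine many such signals, typically learned by a model rather than hand-written, and a single brightness spike on its own is not evidence of manipulation.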

AI-powered voice and video cloning has given malicious actors a powerful tool for impersonating trusted individuals. Whether through a cloned voice or a manipulated video, these techniques can deceive and manipulate unsuspecting victims. The implications for cybersecurity are significant, so individuals and organizations need to stay vigilant. By developing better detection algorithms and staying informed about the latest advancements in AI, we can better protect ourselves from this growing threat.